How to configure negative TTLs for your S3-backed Cloudfront distribution with Samuell

I’m on the og-aws Slack group, one of the more active groups of AWS developers and cloud practitioners. A member of the channel, Samuell, asked a question about S3, Cloudfront, and new files, and I saw the perfect opportunity to help out, so I offered. Here is the initial request he posted in the Slack channel:

Samuell: When iam using S3 and CloudFront do i need to update somehow CDN to see new files or this should work fine for new files? And second question i have custom errors but they dont work it shows every time s3 error… only issue i have now with error pages it shows every time AccessDenied if file is missing not a custom error page that i defined in CDN

Samuell had two problems, whether apparent in the original message or not. First, new items uploaded to S3 would take a while to show up in Cloudfront. Second, Cloudfront’s default 404 page is terrible (his phrasing, we’ll see why I point that out later). We’ll address the first of these problems in this post, and the second in another post. But first, a little more about Samuell.

Tabs vs Spaces: Spaces

Favorite IDE: Visual Studio Code

Current OS: Windows 10

iPhone vs Android: Android

Favorite Superhero: I don’t know

Twitter Handle: @sam_uell1

Context

The CMS, October CMS, is hosted in Samuell’s production AWS account. It allows for file uploads, which it stores in S3. The S3 files are delivered over SSL by a single Amazon Cloudfront web distribution with standard settings.

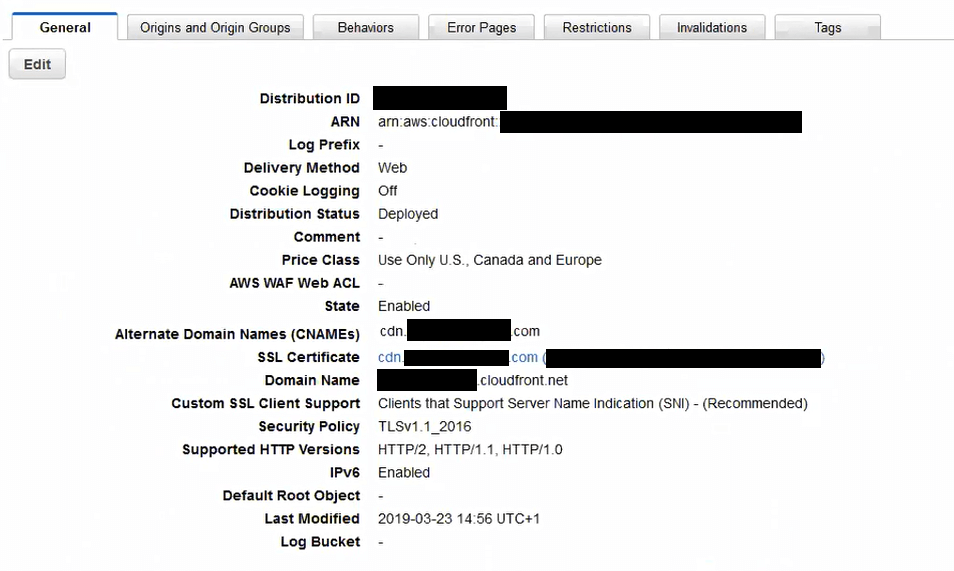

Standard Web Distribution configuration, including CNAME, TLS, and price class

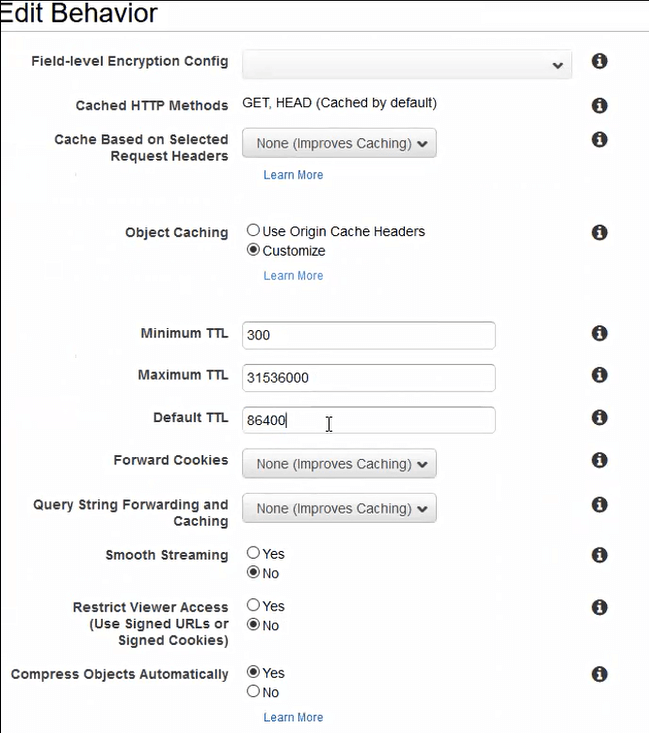

The distribution has a single, simple behavior for all URLs.

There is a min TTL enforced on all items, and the backing for the distribution is a single S3 bucket. It is the correct bucket (verified), and items are showing up correctly.

Symptoms

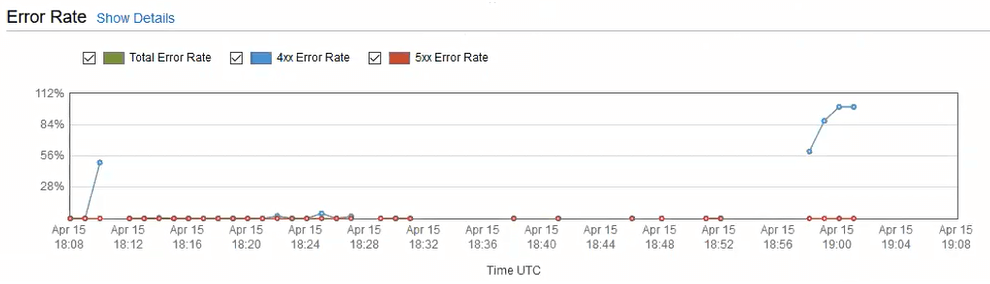

When users upload CDN assets through the CMS, some percentage of them fail to display properly. Instead of showing the correct image, stylesheet, etc., Cloudfront returns the standard S3 XML 403 Forbidden response. This is typical for S3: when the requester isn’t allowed to list the bucket, S3 answers 403 rather than 404 for a missing key, because a 404 would reveal which keys exist. Waiting a few minutes always solves the problem. During the waiting period, a spike in 4xx errors occurs at the CDN edge.

Spike in 4xx errors every time an object gets in this weird state. Drops off after about 5 minutes.

In the screenshare, the exact proportion of newly uploaded items that caused problems was unclear, but it was enough to show in the Cloudfront analytics, as seen in the graph above.

Problem

The underlying problem is that S3 doesn’t work like you think it would. S3 is eventually consistent, meaning you can upload a file, get a confirmation of upload, immediately request the file, and S3 will tell you the file doesn’t exist. S3 uses this technique to increase throughput. There is nothing you can do about S3’s data consistency model. The Amazon S3 documentation indicates:

Updates to a single key are atomic. For example, if you PUT to an existing key, a subsequent read might return the old data or the updated data, but it will never return corrupted or partial data.

So, S3 is the original source of the 403 error, even after a file has been uploaded through the CMS. The reason it doesn’t go away for 5 minutes is the default configuration of negative TTLs in Cloudfront.

When a distribution’s origin (S3 in this case) returns a response, Cloudfront’s job is to cache it in accordance with the Cache-Control headers on that response. In the case of an error, like 403 Forbidden, Cloudfront uses a setting commonly called a “negative TTL” (the console labels it “Error Caching Minimum TTL”) to decide how long to cache the error. The default for 4xx and 5xx errors is 5 minutes. This behavior helps with DDoS attacks and the stampede effect, because repeated requests for a failing URL are answered at the edge instead of hitting the origin. From the Amazon Cloudfront documentation:

By default, when your origin returns an HTTP 4xx or 5xx status code, CloudFront caches these error responses for five minutes.

The real problem is in the combination of the S3 consistency model plus the default negative TTL from Cloudfront. Either, without the other, would be fine. Together, they can play tricks on unsuspecting DevOps engineers.
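To make the interaction concrete, here is a toy model of it in Python. It is only a sketch: `Origin` and `Edge` are hypothetical stand-ins for the S3 bucket and a single Cloudfront edge, and the caching logic is a simplification, not Cloudfront’s actual implementation. It models the case where a URL is requested before the object is readable at the origin.

```python
from dataclasses import dataclass, field

NEGATIVE_TTL_SECONDS = 300  # the 5 minute default described above


@dataclass
class Origin:
    """Stand-in for the S3 bucket: a key is either readable (200) or not (403)."""
    keys: set = field(default_factory=set)

    def get(self, key: str) -> int:
        return 200 if key in self.keys else 403


@dataclass
class Edge:
    """Stand-in for one edge location that caches error responses."""
    origin: Origin
    negative_ttl: int = NEGATIVE_TTL_SECONDS
    _errors: dict = field(default_factory=dict)  # key -> (status, expires_at)

    def get(self, key: str, now: float) -> int:
        cached = self._errors.get(key)
        if cached and now < cached[1]:
            return cached[0]  # still inside the negative TTL, origin is not asked
        status = self.origin.get(key)
        if status >= 400:
            self._errors[key] = (status, now + self.negative_ttl)
        else:
            self._errors.pop(key, None)
        return status


origin = Origin()
edge = Edge(origin)
print(edge.get("logo.png", now=0))    # 403: requested before the object is readable
origin.keys.add("logo.png")           # the upload becomes readable in the origin
print(edge.get("logo.png", now=10))   # 403: the edge answers from its cached error
print(edge.get("logo.png", now=301))  # 200: the cached error has expired
```

With `Edge(origin, negative_ttl=5)` the second request at `now=10` already returns 200, which is the whole idea behind the fix below.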

I’ve also found this to be the case with CORS in S3 and Cloudfront — individually, they work fine; together, there’s a bug. That’s a story for another day, though.

Solution

While there’s nothing we can do about the S3 eventual consistency, Cloudfront does allow changes to the negative TTL configuration. Since this is a fairly common, hard-to-solve problem, I made a YouTube video to explain the problem and solution. I’ll also explain the fix with screenshots below.

There are two levels of fixing this S3/Cloudfront issue. The first fix, which works perfectly, but is near impossible to enforce, is to never request assets from Cloudfront until they are guaranteed to be there in S3. The second fix, which minimizes errors, but doesn’t eliminate them, is to set the negative TTL really low.

Guaranteeing that every asset is readable in S3 before anyone requests it from Cloudfront is impossible. Confirming readability first wouldn’t even do it: S3 has many servers, and the object may have become readable on one and not the others. And even if it were readable on all servers, someone may have requested that URL from Cloudfront by accident, and you’d never know.

Setting the negative TTL really low doesn’t eliminate the problem. You’ll still have missing files and 403 Forbidden errors. What changes is how long they last: the files appear after 5 seconds (a single refresh) instead of 5 minutes. Ideally, the only person looking at the files in the first 5 seconds is the editor of the page, not random users.

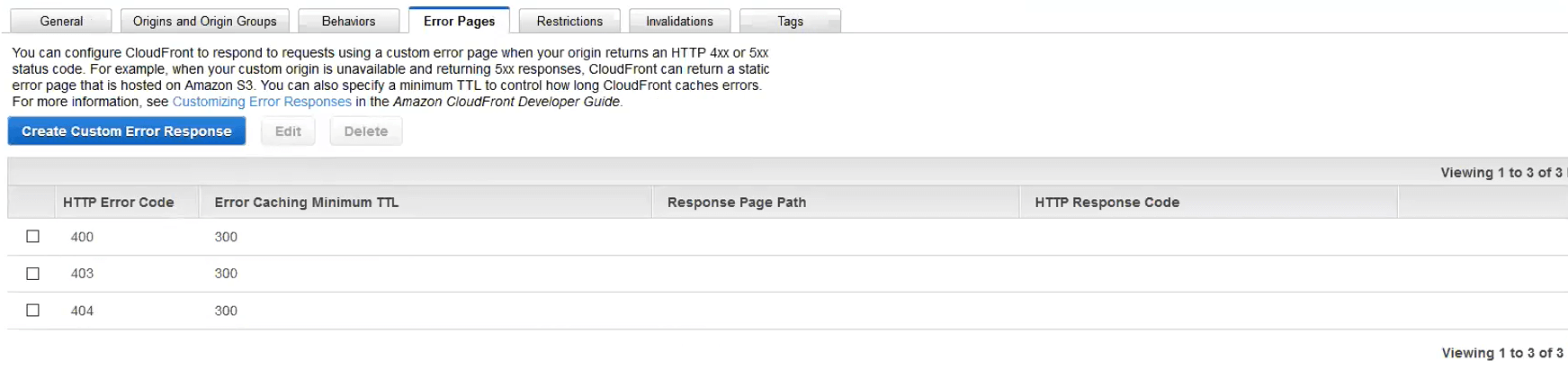

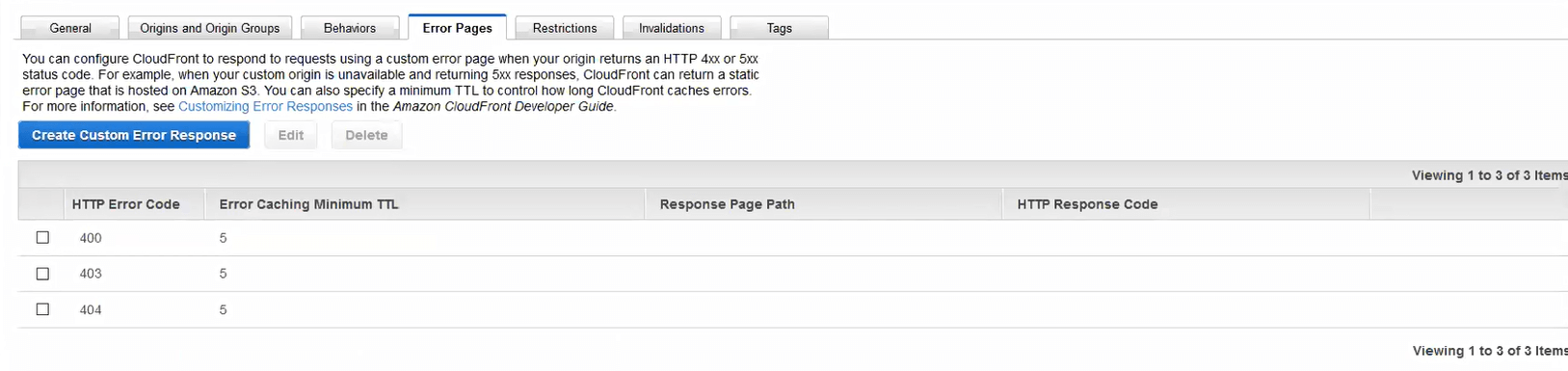

I recommended to Samuell that he set the negative TTLs really low, and he did. To do the same, open Cloudfront, edit your distribution, and click the “Error Pages” tab. Unless you’ve already set up custom error pages or negative TTLs, your list will be empty. Samuell already had a couple of rules on the page.

Samuell’s configured Error Pages with 5 minute TTLs

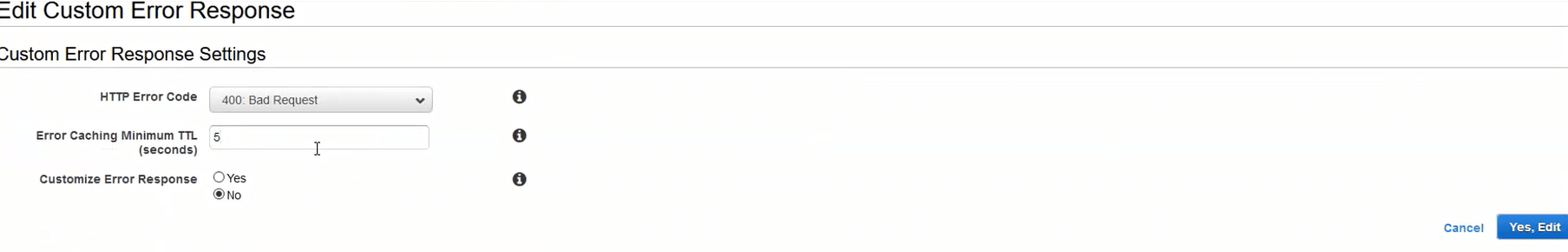

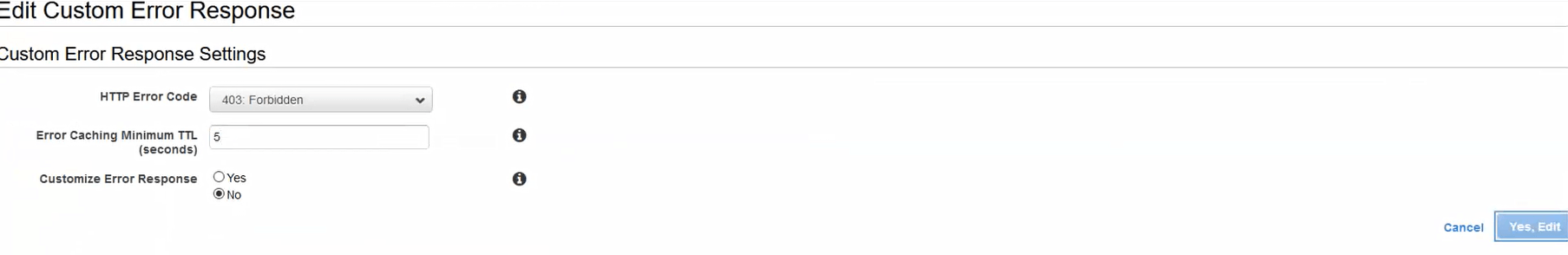

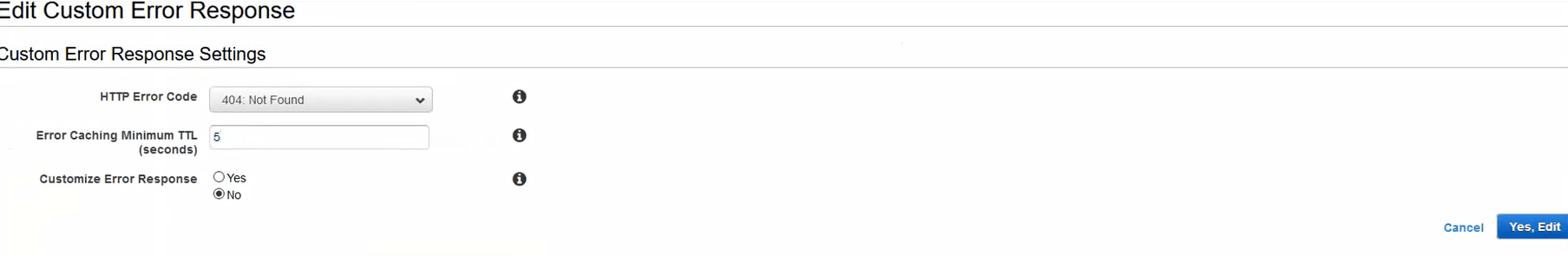

For Samuell, the fix would be to edit each rule he has and lower the TTL. Your action may be different, which I’ll address below. Samuell clicked edit on each individual rule, changed the TTL, and clicked “Yes, Edit” to save the change.

Changing the negative TTL on 400 Bad Request to 5 seconds

Changing the negative TTL on 403 Forbidden to 5 seconds

Changing the negative TTL on 404 Not Found to 5 seconds

403 Forbidden, as well as other existing rules, each with 5 second negative TTLs in Cloudfront

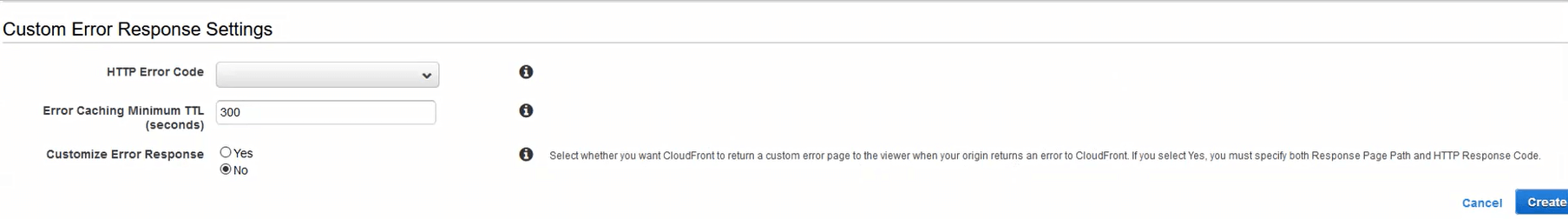

If your list is empty, click “Create Custom Error Response” and add a rule for the 403 Forbidden response code. You won’t need to add the 400 or 404 response codes, because S3 doesn’t return them for a missing file; it returns 403 Forbidden. When you create the new response, you don’t have to customize the error response. Leaving that option on “No” means you’re only modifying the negative TTL.

Creating a new custom error response
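If you’d rather script the change than click through the console, the same edit can be sketched in Python with boto3, the AWS SDK. Treat this as a rough outline under assumptions rather than the way Samuell did it: the function names are mine, boto3 and AWS credentials are assumed to be available, and the 5 second default simply mirrors the value used above. The first function only rewrites the configuration dictionary, lowering the TTL on every existing rule and adding a 403 rule if one is missing; the second fetches the live configuration and sends the update.

```python
import copy


def with_low_negative_ttl(distribution_config: dict, ttl_seconds: int = 5,
                          ensure_codes=(403,)) -> dict:
    """Return a copy of a DistributionConfig with short error-caching TTLs."""
    config = copy.deepcopy(distribution_config)
    responses = config.setdefault("CustomErrorResponses", {"Quantity": 0})
    items = responses.setdefault("Items", [])

    # Lower the TTL on rules that already exist (Samuell's situation).
    for item in items:
        item["ErrorCachingMinTTL"] = ttl_seconds

    # Add TTL-only rules for codes that have none (the empty-list situation).
    existing = {item["ErrorCode"] for item in items}
    for code in ensure_codes:
        if code not in existing:
            items.append({"ErrorCode": code, "ErrorCachingMinTTL": ttl_seconds})

    responses["Quantity"] = len(items)
    return config


def apply_low_negative_ttl(distribution_id: str, ttl_seconds: int = 5) -> None:
    """Fetch the distribution config, lower the error TTLs, and push the update."""
    import boto3  # third-party; imported here so the helper above stays dependency-free

    client = boto3.client("cloudfront")
    current = client.get_distribution_config(Id=distribution_id)
    updated = with_low_negative_ttl(current["DistributionConfig"], ttl_seconds)
    client.update_distribution(
        Id=distribution_id,
        IfMatch=current["ETag"],  # the update is rejected if the config changed meanwhile
        DistributionConfig=updated,
    )
```

A rule with only `ErrorCode` and `ErrorCachingMinTTL` corresponds to leaving the customize option on “No” in the console: no custom page, just a shorter negative TTL.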

Once you have modified your distribution, changes take about 15 minutes to propagate. Once they’re done, test your changes with the following steps:

- Pick a URL of a file that doesn’t exist yet (simulates the timing of S3’s eventual consistency)

- Navigate to the Cloudfront version of the URL in your browser, confirm 403 Forbidden

- Upload a file (like an image) to the matching URL in S3

- Wait 5 seconds

- Navigate to the Cloudfront version of the URL again, confirm file exists and is delivered properly
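The steps above can also be run as a small script. This is a minimal sketch using only the Python standard library; `check_negative_ttl` and its parameters are names I made up, and the `upload` argument is whatever callable puts the file in your bucket (the CMS, the AWS CLI, an SDK call), which is left to you.

```python
import time
import urllib.error
import urllib.request


def http_status(url: str) -> int:
    """Return the HTTP status code for a GET request, including error codes."""
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code


def check_negative_ttl(url, upload, wait_seconds=5, fetch=http_status, sleep=time.sleep):
    """Walk through the manual test: 403 first, upload, wait, then expect 200."""
    before = fetch(url)
    if before != 403:
        raise RuntimeError(f"expected 403 before the upload, got {before}")
    upload()             # put the file at the matching key in S3
    sleep(wait_seconds)  # give the cached error time to expire
    return fetch(url) == 200
```

A call might look like `check_negative_ttl("https://cdn.example.com/test-image.png", upload=my_upload)`, where both the URL and `my_upload` are placeholders. A `False` result means the edge is still serving the cached error, so either the change hasn’t propagated yet or the TTL is higher than the wait.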

Learnings

S3 is eventually consistent. In just about every file system in the world, even NFS, a written file exists. In S3, that’s not necessarily the case, though it will be a majority of the time. Plan for missing items with retries and applicable failure messages.
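As one way to plan for missing items, a client can retry a few times with growing pauses before showing a failure message. The sketch below is an illustration, not something from Samuell’s setup: `fetch_with_retries` is a hypothetical helper, `fetch` is any zero-argument callable that returns an HTTP status code, and the attempt count and delays are arbitrary starting points.

```python
import time


def fetch_with_retries(fetch, attempts=4, first_delay=0.5,
                       retry_on=(403, 404), sleep=time.sleep):
    """Call fetch() until it stops returning a 'missing' status or attempts run out.

    Returns the last status seen, so the caller can decide on a failure message.
    Assumes attempts >= 1.
    """
    delay = first_delay
    for attempt in range(1, attempts + 1):
        status = fetch()
        if status not in retry_on or attempt == attempts:
            return status
        sleep(delay)
        delay *= 2  # back off: 0.5s, 1s, 2s, ...
```

Keep in mind that retrying through Cloudfront only helps once the negative TTL is shorter than the total time spent retrying; with the 5 minute default, every retry gets the same cached 403.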

By default, Cloudfront caches missing items for 5 minutes. This is primarily a concern when using S3 as the origin, but can also be an issue during releases if the origin is your application. The benefit of the 5 minute negative TTL is DDoS protection and quick error messages. The drawback is that new resources don’t work immediately.

Negative TTLs exist. Cloudfront, DNS, the JVM, and a whole bunch of other tools use negative TTLs to cache the absence of an item or an error about it. You just have to be aware of them.

Credits

Guardian DevOps is a free service that puts you in contact with DevOps and SRE experts to solve your infrastructure, automation, and monitoring problems. Tag us in a post on Twitter @GuardianDevOps, and together we’ll solve your problems in real time. Sponsored by Blue Matador.

Blue Matador is an automated monitoring and alerting platform. Out-of-the-box, Blue Matador identifies your AWS and computing resources, understands your baselines, manages your thresholds and sends you only actionable alerts. No more anxiety wondering, “Do I have an alert for that?” Blue Matador has you covered.