IAM Access in Kubernetes: The AWS Security Problem

AWS Identity and Access Management (IAM) is the service that controls who and what can access the resources in your AWS account. Most Kubernetes “Quick Start” guides for AWS do not adequately cover how to manage IAM access for your pods. This blog series first goes over the security issues specific to AWS IAM on Kubernetes, then compares solutions, and ends with a detailed walkthrough for setting up your cluster with one of those solutions.

AWS Security and First-Time Kubernetes Setup

At Blue Matador, I am in charge of our production Kubernetes setup. I had a few years of experience running microservices on EC2 before using Kubernetes, including managing dozens of IAM policies designed to restrict access to sensitive data to the services that needed it. When it came time to create our first Kubernetes cluster, I read dozens of tutorials and patched together a kops installation that met our needs.

Using AWS IAM Roles for Kubernetes Security

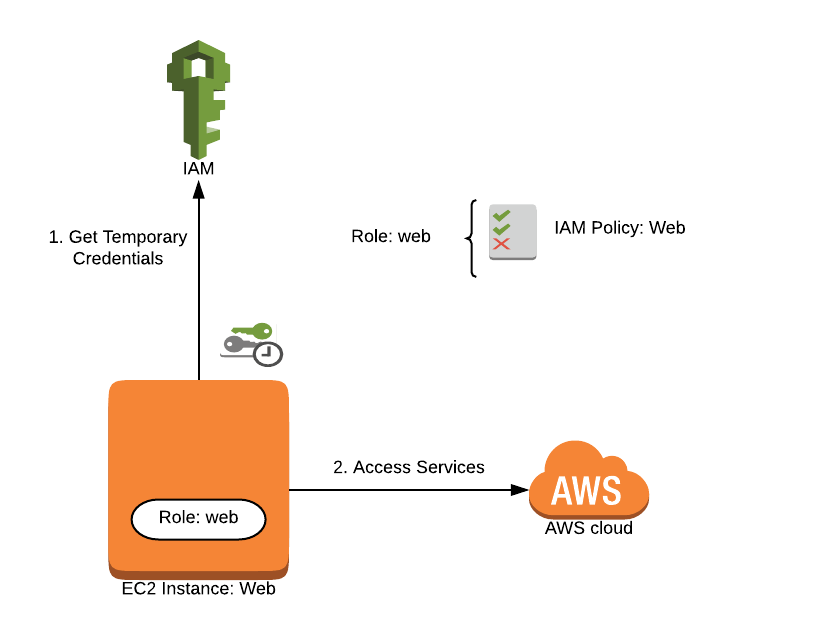

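An IAM role is an identity you create in AWS and attach permission policies to. Unlike a user, a role has no long-term credentials of its own: a trusted person, service, or EC2 instance assumes the role and receives temporary credentials, so a single role can be used by more than one person or entity.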

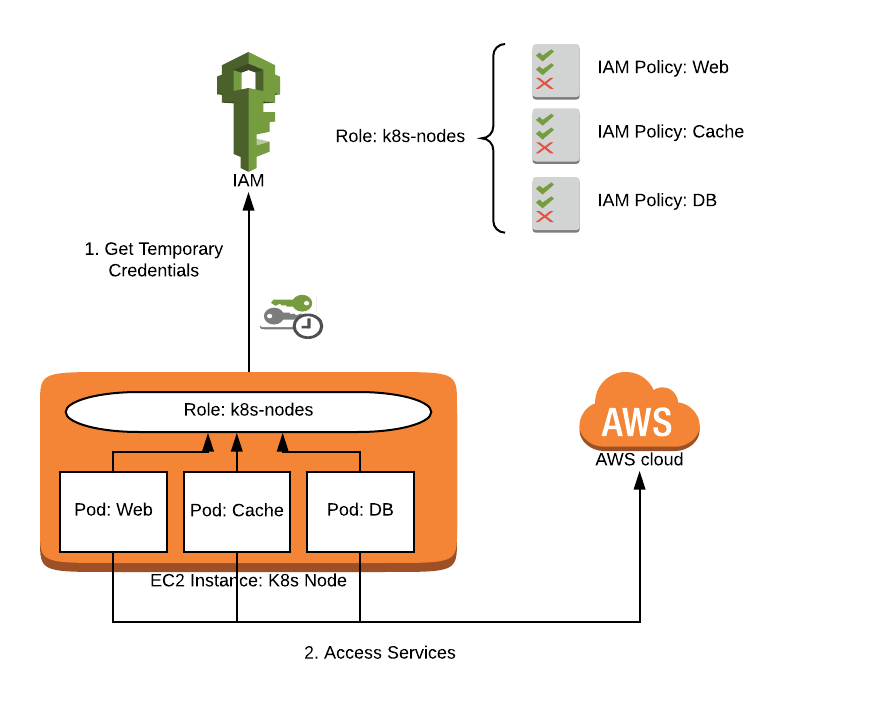

When we began running services in Kubernetes, I immediately ran into issues with IAM permissions. Like many, I solved the issue by attaching IAM policies to the role my Kubernetes nodes used so that the pods could access all of the services via the metadata API. This is a fine solution for very simple setups or non-production clusters, but it exposes your AWS account unnecessarily.

Kubernetes nodes running in AWS often need access to some AWS services in order to do their job: modifying Route53 records, creating Elastic Load Balancers, and querying the metadata API to identify nodes. Every pod running on a node can perform these operations too, so every permission you add to the node is also added to every pod running on that node. Handling AWS authorization this way is a bad security practice and violates the principle of least privilege.
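To see the problem on a test cluster, a throwaway pod like the one below can be enough. This is only a sketch: the pod name and the `curlimages/curl` image are illustrative choices, and it assumes the nodes still allow unauthenticated (IMDSv1-style) requests to the metadata API from pods. On nodes that enforce IMDSv2 or a hop limit of 1, the request will fail instead.

```yaml
# Hypothetical probe pod; the name and image are assumptions for illustration.
apiVersion: v1
kind: Pod
metadata:
  name: metadata-probe
spec:
  restartPolicy: Never
  containers:
    - name: probe
      image: curlimages/curl
      # Asks the EC2 metadata API for the IAM role attached to the node.
      # The response is the role name; appending that name to the URL
      # returns temporary credentials for the node role.
      command:
        - curl
        - -s
        - http://169.254.169.254/latest/meta-data/iam/security-credentials/
```

If `kubectl logs metadata-probe` prints a role name, any other pod on that node can retrieve the same credentials.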

AWS IAM for pods on EC2 nodes via instance profile

Kubernetes Security and the Principle of Least Privilege

What's the principle of least privilege? In a nutshell, the principle of least privilege means ensuring that only the correct entities have certain permissions, and that unused permissions are not granted. Applied to your Kubernetes cluster, it means that each pod should only have the access it needs to perform its own function.

Once you begin adding third-party pods to your cluster, you should be very worried about their access to your AWS account. Should your fluentd pods be able to delete items in S3? Is Grafana really supposed to have access to your data in DynamoDB? These examples perfectly illustrate the principle of least privilege.
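As a rough illustration of what least privilege could look like for the fluentd case, the sketch below defines a policy that can write log objects to one bucket and do nothing else. IAM policies are natively JSON; the same document is expressed here as a CloudFormation template in YAML. The resource name and the bucket `example-log-bucket` are hypothetical, and a real fluentd output plugin may need a few more actions than this.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Resources:
  # Hypothetical policy for a log shipper: write-only access to one bucket.
  FluentdLogWriterPolicy:
    Type: AWS::IAM::ManagedPolicy
    Properties:
      Description: Allow writing log objects to a single bucket, nothing else
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Effect: Allow
            Action:
              - s3:PutObject
            # example-log-bucket is a placeholder, not a real bucket.
            Resource: arn:aws:s3:::example-log-bucket/*
```

Because nothing grants `s3:DeleteObject`, a misbehaving or compromised log shipper holding only this policy could not delete existing objects.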

The ease with which we can run things in Kubernetes highlights this issue further. A one-line kubectl command can install very complex systems in your cluster that are capable of anything. Deploying your developers’ code is all too easy with the advent of Docker, and we all know that our devs make mistakes. By restricting every pod’s AWS access, you can sleep easier knowing that a random container can’t delete your entire database without your permission.

Using the node IAM role to manage pod permissions also makes it nearly impossible to audit which containers have access to your AWS resources. In short, the node role is a bad way to configure IAM access for Kubernetes pods because (1) it violates the principle of least privilege and (2) it is not auditable.

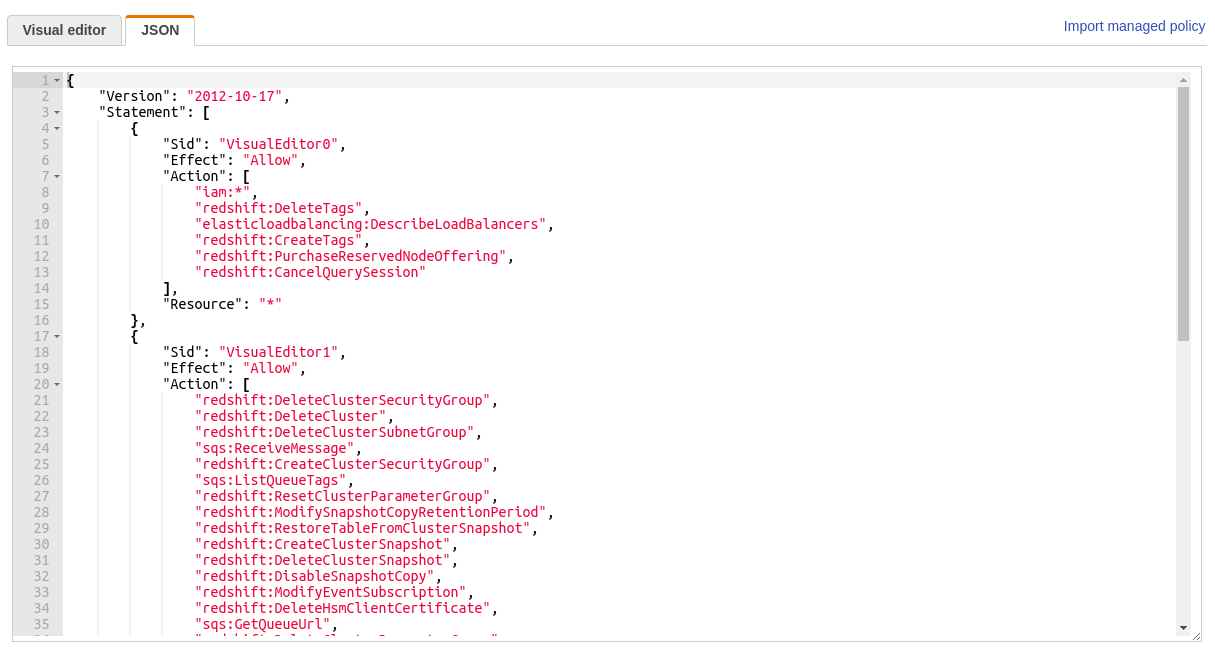

Unmanageable IAM policy

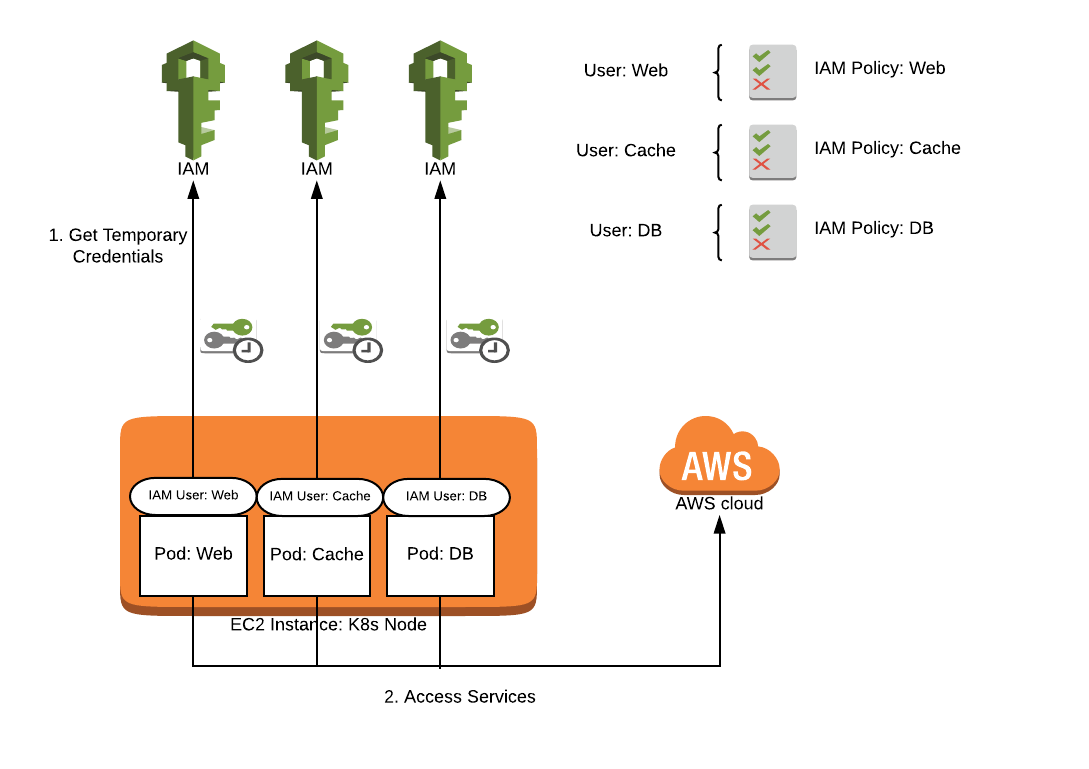

Using IAM Users for Kubernetes Security

An alternative to using the node role is to use IAM users instead. An IAM user is an identity with permission policies, just like an IAM role, but it is meant for a single person or application and has long-lived credentials: a password for the console, or an access key ID and secret access key for programmatic access. With this method, you create an IAM user with the correct permissions for each of your services and then pass its access keys to the pod via environment variables. This method does not violate the principle of least privilege because each pod has only the access it requires.
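A per-service IAM user might be sketched as follows, again as a CloudFormation template. The user name, policy name, and the choice of CloudWatch read actions for Grafana are assumptions for illustration. Creating the access key itself is deliberately left out, since where the secret key ends up depends on your tooling.

```yaml
AWSTemplateFormatVersion: "2010-09-09"
Resources:
  # Hypothetical IAM user dedicated to one service (Grafana).
  GrafanaUser:
    Type: AWS::IAM::User
    Properties:
      UserName: k8s-grafana
      Policies:
        - PolicyName: grafana-cloudwatch-read
          PolicyDocument:
            Version: "2012-10-17"
            Statement:
              # Read-only metric access; no DynamoDB or S3 permissions at all.
              - Effect: Allow
                Action:
                  - cloudwatch:ListMetrics
                  - cloudwatch:GetMetricData
                Resource: "*"
```

With one user per service, the policies attached to each user give you a direct record of what that service is allowed to do.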

AWS IAM for pods via IAM user credentials

Use Kubernetes secrets to store the credentials and then inject them into environment variables. Using a secret gets the credentials out of your config files, but by default secrets are only base64 encoded, not encrypted. It is also easy to accidentally let unrelated pods read your secrets, even with RBAC enabled.

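    env:
      # Secret names must be valid DNS subdomain names, so hyphens
      # are used here instead of underscores.
      - name: AWS_ACCESS_KEY_ID
        valueFrom:
          secretKeyRef:
            name: aws-iam-user-1
            key: accessKeyId
      - name: AWS_SECRET_ACCESS_KEY
        valueFrom:
          secretKeyRef:
            name: aws-iam-user-1
            key: secretAccessKey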
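For completeness, here is a sketch of the secret those `secretKeyRef` entries would read from, plus an RBAC Role that limits reads to that one secret. Kubernetes requires secret names to be valid DNS subdomain names, so this sketch uses the hyphenated name `aws-iam-user-1`. Whatever name you choose must match the `name` in each `secretKeyRef`. The key values are the placeholder credentials from AWS's own documentation, and the namespace and Role name are assumptions.

```yaml
# Hypothetical secret holding one IAM user's access keys.
apiVersion: v1
kind: Secret
metadata:
  name: aws-iam-user-1
  namespace: default
type: Opaque
# stringData accepts plain text; the API server stores it base64 encoded.
stringData:
  accessKeyId: AKIAIOSFODNN7EXAMPLE
  secretAccessKey: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY
---
# Role that can read only this one secret, rather than every secret
# in the namespace. It still has to be bound to a subject with a RoleBinding.
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: read-aws-iam-user-1
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["aws-iam-user-1"]
    verbs: ["get"]
```

Scoping the rule with `resourceNames` narrows who can read the keys, but anyone who can read the secret still sees them in plain text after a base64 decode.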

Storing credentials this way makes key rotation harder: you have to rotate an IAM user's access keys yourself, whereas the temporary credentials issued for IAM roles are rotated automatically. Some organizations also restrict IAM users, either by forbidding users with access keys entirely or by requiring two-factor authentication for all users. Additionally, having access keys available in the pod poses some risks. A developer may accidentally log all environment variables or hardcode access keys to avoid the hassle of managing a secret; either of these could easily expose your keys.

Other Options for IAM in Kubernetes: Kube2iam and Kiam

We should strive to do better than allowing carte blanche access or storing access keys in a way that another system could easily compromise.

In this post, I’ve covered (1) providing IAM access via the Kubernetes node IAM role and (2) providing IAM access to individual pods via IAM user credentials. Each has its advantages and disadvantages, but neither feels like a solution that should be used in a production Kubernetes cluster. Two widely used projects manage IAM access considerably better than either of these methods: kube2iam and kiam. In the next post, I will do a full feature comparison of the two to determine which one is best suited for handling your Kubernetes IAM needs.