I set out to find a credit mechanism or hard-coded limit on packets per second in AWS EC2. After everything I found earlier in this series, I had one more test to run, around t2.unlimited. I wanted to see how “unlimited” it really is and what difference it makes to packet throughput on the instance types that support it. This post is about my findings.

This is the sixth and final post in our series investigating PPS measurements in Amazon EC2. Here are the other posts in the series:

- How many packets per second in Amazon EC2? (introduction)

- EC2 packets per second: guaranteed throughput vs best effort

- Packets per second in EC2 vs Amazon’s published network performance

- Payload size doesn’t affect max PPS limit on AWS

- PPS spike every 110 seconds on EC2

- Using t1/t2/t3 unlimited to increase packet limitations (this post)

Unlimited Mode is Management of Burstable Resources

When you use t2.unlimited, you don’t actually get unlimited resources. What changes is what happens when you exhaust your burst credits: rather than being throttled, the instance keeps bursting and the extra usage is charged to your AWS account. From the AWS doc page on Unlimited Mode:

A burstable performance instance configured as unlimited can burst above the baseline for as long as required. This enables you to enjoy the low instance hourly price for a wide variety of general-purpose applications, and ensures that your instances are never held to the baseline performance.

You can think of unlimited mode as an “unlimited burst” mode, where more bursting costs you more money, but you can burst as much as your application requires.
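To make the credit mechanics concrete, here is a rough Python sketch of the arithmetic. The t2.medium figures (2 vCPUs, 24 credits earned per hour, 60 launch credits) are assumptions taken from AWS’s published T2 numbers rather than from this test, the 30% utilization is purely illustrative, and both function names are made up for this example. It relies on one CPU credit being one vCPU running at 100% for one minute.

```python
def hours_until_exhausted(balance, earn_per_hour, vcpus, utilization):
    """Hours until the credit balance reaches zero, or None if it never does.

    An instance spends vcpus * utilization * 60 credits per hour, because one
    credit pays for one vCPU at 100% for one minute.
    """
    spend_per_hour = vcpus * utilization * 60
    net_drain = spend_per_hour - earn_per_hour
    if net_drain <= 0:
        return None  # at or below baseline: the balance does not run out
    return balance / net_drain


def surplus_credits(balance, earn_per_hour, vcpus, utilization, hours):
    """Credits used beyond the starting balance plus everything earned.

    Simplified model: in standard mode this shortfall shows up as throttling,
    in unlimited mode it is the usage that can end up on the bill.
    """
    spent = vcpus * utilization * 60 * hours
    available = balance + earn_per_hour * hours
    return max(0.0, spent - available)


# Assumed t2.medium figures and a hypothetical sustained 30% per-vCPU load.
hours = hours_until_exhausted(balance=60, earn_per_hour=24, vcpus=2, utilization=0.30)
extra = surplus_credits(balance=60, earn_per_hour=24, vcpus=2, utilization=0.30, hours=10)
print(f"credits exhausted after about {hours:.1f} hours")
print(f"surplus credits after 10 hours: {extra:.0f}")
```

The model ignores the cap on the credit balance and the finer points of how AWS bills surplus credits, so treat it as an illustration of the mechanism rather than a cost estimate.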

There are many burstable resources in AWS. gp2 and magnetic EBS volumes have burstable capacity, and certain instance types have burstable CPU. From the same AWS documentation page as above:

T3 instances are launched as unlimited by default.

T2 instances are launched as standard by default.

In contrast, DynamoDB has an upper threshold that, when reached, induces throttling, and it has no unlimited mode that trades that throttling for extra charges.

In my test on PPS in EC2, I specifically wanted to test CPU on T2 instances, which are burstable, measured in CPU credits, and capable of unlimited mode.

PPS Test on t2.medium was CPU Bound

It’s important to know that the limiting resource in the PPS test on my t2.medium was the CPU. If the test had been network bound, increasing the CPU burst capacity would have had no effect: unlimited mode on T2 instances controls burstable CPU, not memory, network, or any other resource.

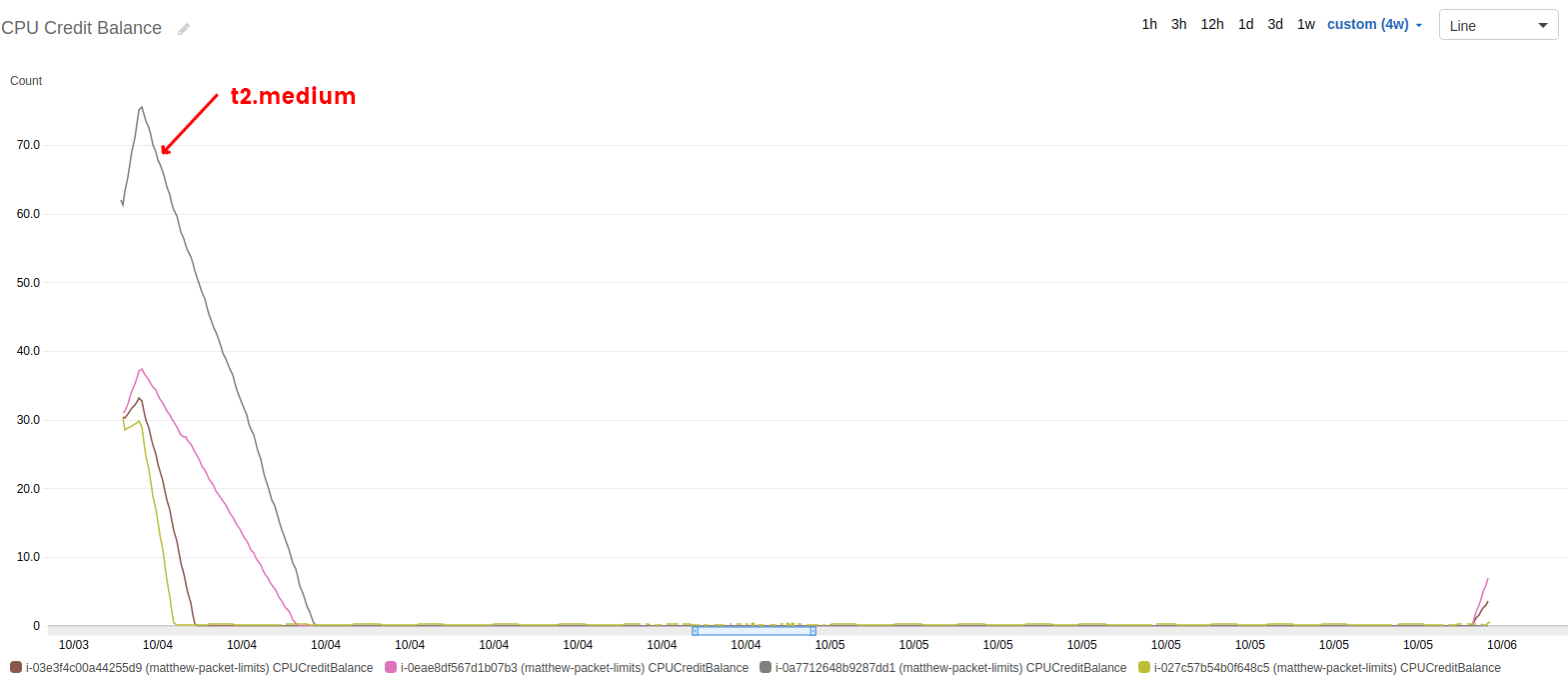

Let’s turn to one of the graphs I showed earlier in the series. I would like to show a graph of just the t2.medium, but CloudWatch threw away all my data weeks before it should have. Nevertheless, the graph still shows that all my T2 instances used up their CPU credits early in the testing period. The graph covers the entire multi-day period of the test.

The CPU credit balance for the t2.medium instance (i-0a7712648b9287dd1) is highlighted and is exhausted within hours of beginning the test.

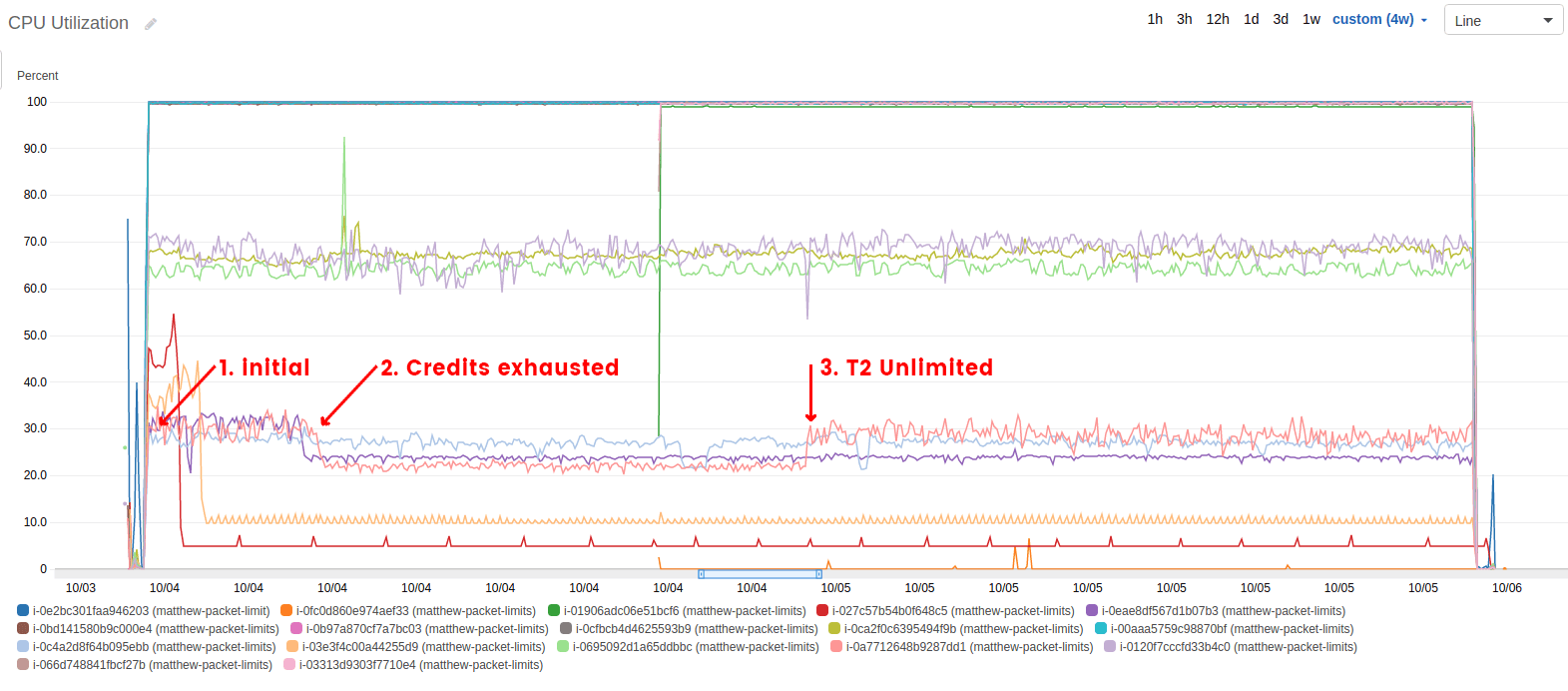

In my test, as in most applications or scripts, once the t2.medium ran out of CPU credits, it became CPU bound and began reporting a lot of steal time. AWS doesn’t like to show steal time, because it makes them look bad, so it’s not reflected in the next graph of CPU utilization. While you can’t see steal time, I’ve highlighted three interesting line segments:

- Initial CPU usage. While the instance still had CPU credits, this is the max value of recorded CPU. It’s not 100%, but that’s because CloudWatch doesn’t report CPU the same way `top` does.

- Exhausted credit usage. After all the CPU credits had been used, this is the max value of recorded CPU.

- Unlimited mode CPU usage. Enabling unlimited mode on CPU burst, the CPU spiked back up to near its original, unthrottled value.

CPU Utilization on the t2.medium drops from 30% to 20% when the CPU credits are gone, and then jumps back to 30% when unlimited mode is enabled.

Using the combination of these graphs, it’s easy to see that our t2.medium was being throttled on CPU for the test, and that unlimited mode did have an effect on CPU usage. The remaining question is the effect unlimited mode has on PPS during this time.
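Since the steal time mentioned above doesn’t appear in the CPU utilization graph, one way to see it is from inside the instance. The following is a minimal sketch, assuming a Linux guest where the aggregate `cpu` line of `/proc/stat` carries a steal counter as its eighth value. The function names are hypothetical, and this is not the tooling used for the test in this series.

```python
import time


def parse_cpu_line(line):
    """Return (steal_ticks, total_ticks) from the aggregate 'cpu' line of /proc/stat."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    values = [int(v) for v in fields[1:]]
    # Field order: user nice system idle iowait irq softirq steal guest guest_nice.
    steal = values[7] if len(values) > 7 else 0
    # guest and guest_nice are already included in user and nice,
    # so only the first eight counters are summed.
    total = sum(values[:8])
    return steal, total


def steal_percent(before, after):
    """Percentage of CPU time stolen between two (steal, total) samples."""
    steal_delta = after[0] - before[0]
    total_delta = after[1] - before[1]
    return 100.0 * steal_delta / total_delta if total_delta > 0 else 0.0


def sample_steal(interval=1.0, path="/proc/stat"):
    """Take two readings `interval` seconds apart and return the steal percentage."""
    with open(path) as f:
        first = parse_cpu_line(f.readline())
    time.sleep(interval)
    with open(path) as f:
        second = parse_cpu_line(f.readline())
    return steal_percent(first, second)
```

Calling `sample_steal()` in a loop on the throttled instance should show the steal percentage climbing once the credits are gone, which is the same figure `top` reports as `st`.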

Effect of Unlimited Mode on t2.medium PPS

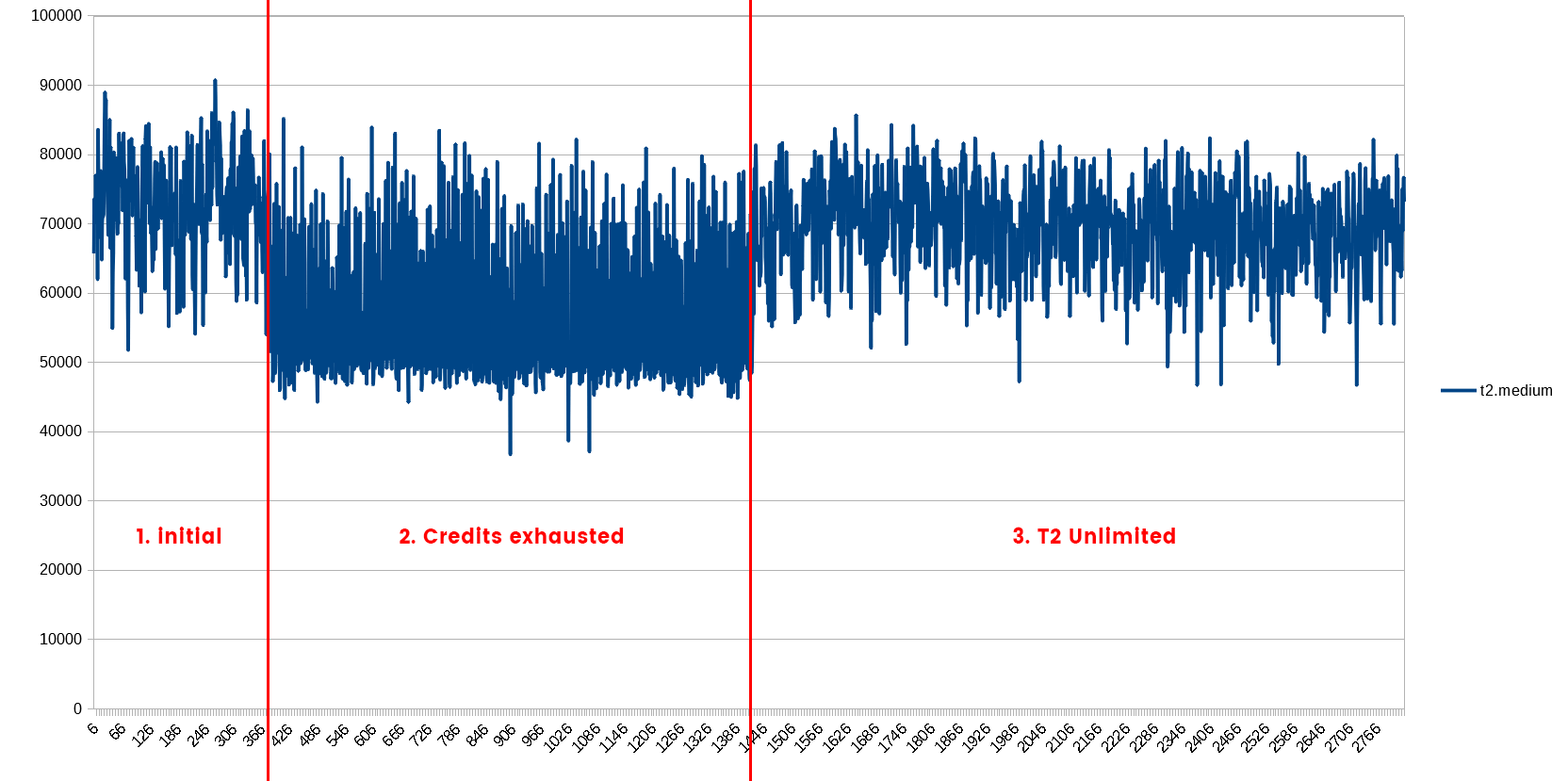

The packets per second on the t2.medium, in a not so surprising reveal, follow the CPU utilization patterns. After the CPU credits are exhausted, PPS drops. Once unlimited mode is enabled, the PPS increases again.

What I found interesting was the direct comparison of initial PPS to the unlimited mode value. Before running the test, I expected the unlimited mode to be higher than the initial (it is “unlimited” after all). After seeing that wasn’t the case, my next expectation was that the initial values and unlimited mode values would be very comparable, if not almost identical. Wrong again.

PPS on the t2.medium during the same time frame as the previous graphs. Each data point is the average of 60 one-second samples taken during the test.

What I saw, and have now confirmed through simple math, is that the PPS in unlimited mode was 4.8% lower than the initial PPS. As far as packets on the network are concerned, it’s measurably better to not rely on the unlimited mode feature!

Before the CPU was throttled, the average rate of packets per second was 73,193. During throttling, the average was 56,631 (22.7% less than initial). During unlimited mode, the average was 69,719 (4.8% less than initial). The standard deviation was 7,168 for initial, 9,107 for throttled, and 6,220 for unlimited.
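The “simple math” behind those percentages can be sketched in a few lines of Python. The helper name `percent_below` is made up for this example, and the inputs are the rounded averages quoted above, so the results land within about a tenth of a percentage point of the quoted figures rather than matching them exactly.

```python
def percent_below(value, reference):
    """How far `value` sits below `reference`, as a percentage of `reference`."""
    return 100.0 * (reference - value) / reference


# Average packets per second for each phase, as quoted in the text.
initial, throttled, unlimited = 73_193, 56_631, 69_719

print(f"throttled vs initial: {percent_below(throttled, initial):.1f}% lower")
print(f"unlimited vs initial: {percent_below(unlimited, initial):.1f}% lower")
```

Note that the choice of reference matters: “unlimited is X% lower than initial” and “initial is Y% higher than unlimited” give slightly different numbers for the same pair of averages.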

Now, in all fairness, I should point out three things related to the disparity between initial and unlimited modes:

- It could be the lack of data points prior to the CPU getting throttled. I had little control over that number while running the test at maximum capacity.

- Somewhat related to the first point, both averages are within a single standard deviation of the other. The whole disparity could be statistical noise.

- The statistics quoted above don’t take into account the guaranteed throughput and best effort mechanisms we discovered in previous posts.
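The standard deviation caveat can be checked directly against the quoted numbers. This is a crude sketch, not a significance test: it only asks whether the gap between two averages is smaller than each phase’s standard deviation. The function name `within_one_sigma` is hypothetical.

```python
def within_one_sigma(mean_a, sd_a, mean_b, sd_b):
    """True if the gap between the means is no larger than either standard deviation."""
    gap = abs(mean_a - mean_b)
    return gap <= sd_a and gap <= sd_b


# (average PPS, standard deviation) for each phase, as quoted in the text.
initial = (73_193, 7_168)
throttled = (56_631, 9_107)
unlimited = (69_719, 6_220)

print("initial vs unlimited:", within_one_sigma(*initial, *unlimited))
print("initial vs throttled:", within_one_sigma(*initial, *throttled))
```

With these figures the initial and unlimited averages fall within one standard deviation of each other, while the initial and throttled averages do not.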

Let’s be honest, though. Nobody would choose to use t2.medium if they relied heavily on the burst capacity. If that were the case, most people would just upgrade to a c5 or m4 instance. Relying on unlimited mode is for people who want to use the smallest possible instance size while minimizing the risk of performance-based outages.

End of PPS Benchmarking Series

I hope you enjoyed reading about my network benchmarks as much as I enjoyed creating, running, and reporting on them. Stay tuned for another series about ELB and ALB statistics on pre-warming and scale-up time.
